It’s Oct 23 and I’m finally getting around to writing a summer lab update covering milestones, publications, and conference activity for my lab. It’s actually been quite a while (the last update was in 2022), so this covers more than one year’s worth. Let’s start with the major milestones and awards, and then move on to new papers and research.

Major Milestones

Over the last year, we’ve seen the following major achievements:

- Tim Qiu earned an MSc in Neuroscience, July 2023, https://ir.lib.uwo.ca/etd/9384/

- Julia Ignaszewski earned an MSc in Psychology, September 2023, https://ir.lib.uwo.ca/etd/9863/

- Chelsea McKenzie earned an MSc in Psychology, Aug 2024, https://ir.lib.uwo.ca/etd/10428/

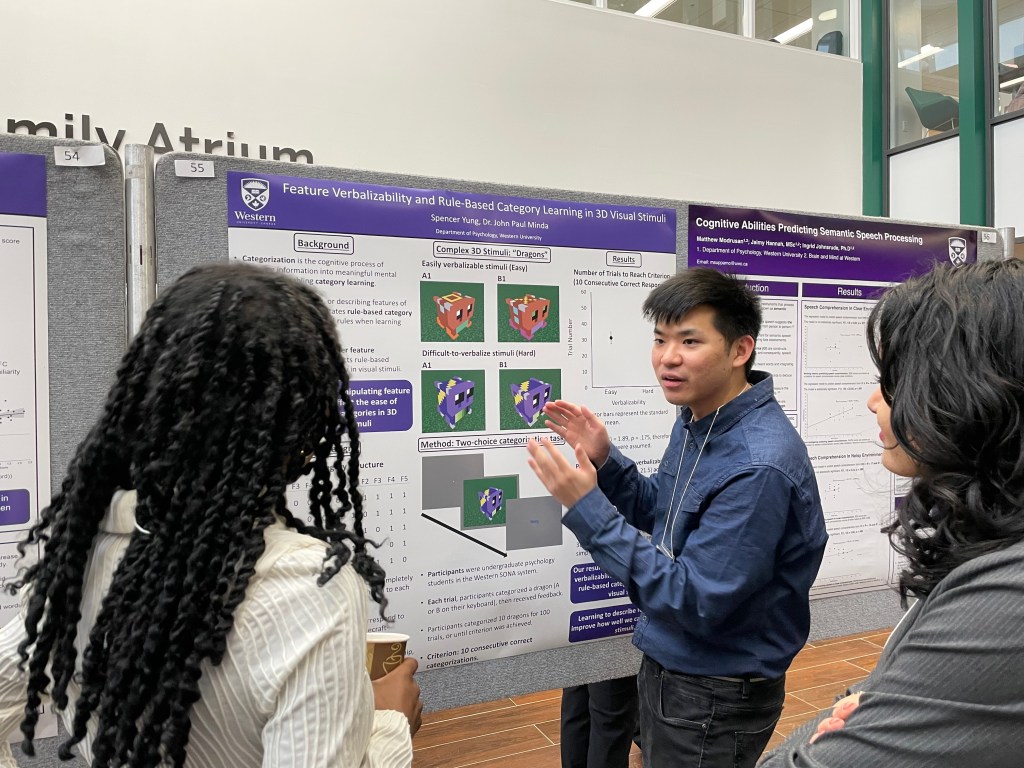

- Liza Kholwadwala and Spencer Yung completed an honours specialization in Psychology in 2024.

- Dr. Minda took on the role of Undergraduate Chair in the Department of Psychology.

Awards

The award-winning members of the Minda Lab continue to be recognized for excellence!

- Anthony Cruz received the 2023 J. Frank Yates Award Supporting Diversity and Inclusion in Cognitive Psychology from the Psychonomic Society

- Anthony Cruz received the G. Keith Humphrey Award and the Social Science Graduate Alumni Award in 2024.

- Chelsea McKenzie received the Council of Canadian Departments of Psychology’s TA of the Year Award in 2024.

- Madeline Bloomberg received the Reva Gerstein Fellowship for Masters Study in Psychology in 2024.

- Dr. Minda received the Teaching Excellence Award from the Ontario Undergraduate Student Alliance (OUSA), 2023

- Dr. Minda received the Edward G. Pleva Award for Excellence in Teaching in 2024, which is the University’s highest teaching honour.

New Lab Members

- Chelsea McKenzie is continuing on with a PhD in the lab, co-supervised by Dr. Jared

- Ardhra Maliakal and Lydia Tzianas are completing an honours specialization in our lab

- Ann Njeru, Maggie Chekan, Sierra Balram-Fredricks, Isabella Cullen, and Alexia Romita are working on independent study projects with Minda Lab members.

Research in 2023-2024

We published the following papers and preprints in 2023 and 2024 (I’ll write another update for some of these to talk about the work in more detail).

- Minda, J. P., Roark, C. L., *Kalra, P. B., & *Cruz, A. (2024). Single and multiple systems in categorization and category learning. Nature Reviews: Psychology, 3, 536–55

- *Cruz, A., & Minda, J. P. (2024). The spacing effect in remote information-integration category learning. Memory & Cognition, https://doi.org/10.3758/s13421-024-01569-w

- Azevedo, F., Pavlović, T., Rêgo, G. G., Ay, F. C., Gjoneska, B., Etienne, T. W., Minda, J. P., … Sampaio, W. M. (2023). Social and moral psychology of COVID-19 across 69 countries. Scientific Data, 10(1), 272

- *Kalra, P. B., Batterink, L. J., & Minda, J. P. (2024). Procedural and declarative knowledge simultaneously contribute to category response selection. https://osf.io/preprints/psyarxiv/fpq8y

- *Qiu, T. T., & Minda, J. P. (2023). Novel concentric-circle technique interrogates implicit category learning. https://doi.org/10.31234/osf.io/ps5aq

- *Ruiz Pardo, A. C., *McKenzie, C. M., *Khemani, N., & Minda, J. P. (2023). Bridging the WEIRD gap in category learning: exploring an online-based solution. https://doi.org/10.31234/osf.io/hcedv

- *Kalra, P. B., Batterink, L. J., & Minda, J. P. (2024). Procedural and declarative category learning simultaneously contribute to downstream processes. In L. Samuelson, S. Frank, M. Toneva, A. Mackey, and E. Hazeltine (Eds.), Proceedings of the 46th Annual Conference of the Cognitive Science Society. Rotterdam, NL: Cognitive Science Society.

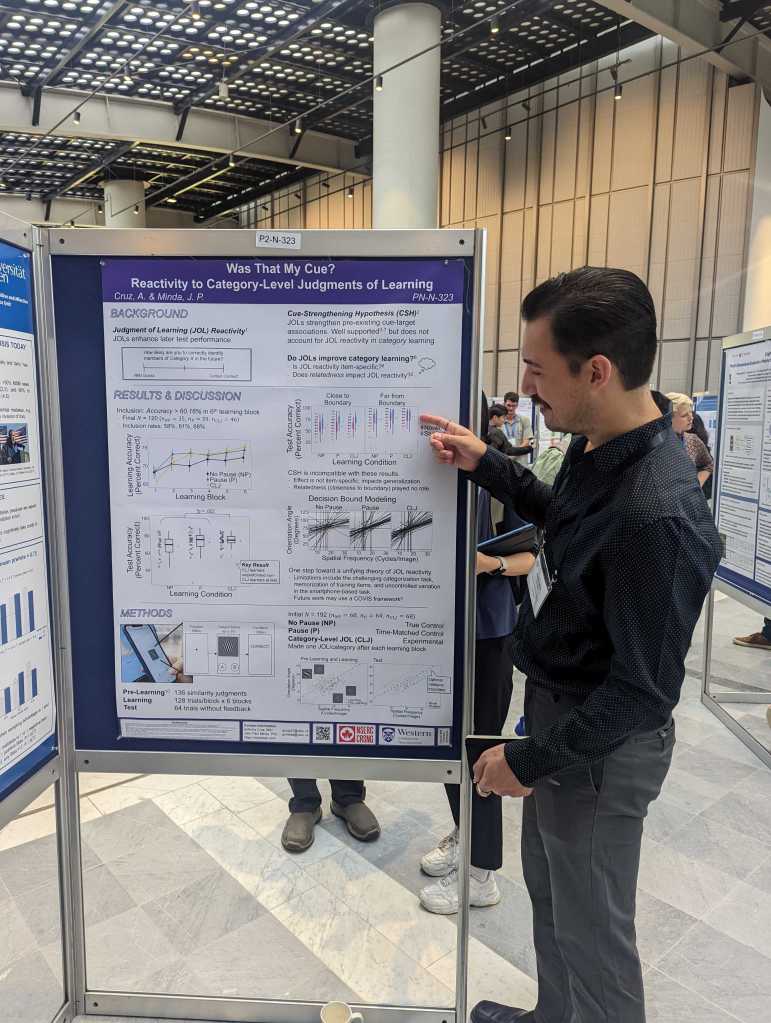

- *Cruz, A., & Minda, J. P. (2024). Was that my cue? Reactivity to category-level judgments of learning. In L. Samuelson, S. Frank, M. Toneva, A. Mackey, and E. Hazeltine (Eds.), Proceedings of the 46th Annual Conference of the Cognitive Science Society. Rotterdam, NL: Cognitive Science Society.

Conference Activity

Very busy year for the Minda Lab!

- *Bloomberg, M., & Minda, J. P. (November 2024). Think global or think local: Attentional processing in category learning. Poster to be presented at the 65th Annual Meeting of the Psychonomic Society, New York, NY, USA.

- *Cruz, A. & Minda, J. P. (November 2024). Boost or bust? Role of JOLs in word pair recognition. Poster to be presented at the 65th Annual Meeting of the Psychonomic Society, New York, NY.

- *McKenzie, C. L., *Kholwadwala, L., Tzianas, L., Carrier, N., Anees, U., Romita, A., & Minda, J. P. (November 2024). Take me down to the prototype city, but which one? Differences in semantic representations of settlement between Canada and the United States. Poster to be presented at the 65th Annual Meeting of the Psychonomic Society, New York, NY, USA.

- *Cruz, A. & Minda, J. P. (July 2024). Was that my cue? Reactivity to category-level judgments of learning. Poster presented at the Annual Meeting of the Cognitive Science Society, Rotterdam, NL.

- *Bloomberg, M., *Kalra, P., & Minda, J.P. (June 2024). Individual differences in implicit category learning. Poster presented at the 34th Annual Meeting of the Canadian Society for Brain, Behaviour, and Cognitive Science, Edmonton, AB, Canada.

- *McKenzie, C. M., & Minda, J. P. (June 2024). Text and the city: Extracting prototypes from lexical feature norms for settlement concepts. Poster presented at the 34th Annual Meeting of the Canadian Society for Brain, Behaviour, and Cognitive Science, Edmonton, AB, Canada.

- Vasudevan, V., Burke, S. M., MacDougall, A., Minda, J. P., Tucker, P., & Irwin, J. D. (February 2024). Exploring the effectiveness of an evidence-based mindfulness program for graduate students in Ontario, Canada. Talk presented at the Health and Rehabilitation Sciences Graduate Research Conference, London, ON, Canada.

- *Kalra, P. & Minda, J. P. (November 2023). Individual differences in interaction between memory systems. Talk presented at the 64th Annual Meeting of the Psychonomic Society, San Francisco, CA.

- *McKenzie, C. L. & Minda, J. P. (November 2023). Urban mindscapes: Exploring the conceptual structure of where we live. Poster presented at the 64th Annual Meeting of the Psychonomic Society, San Francisco, CA.

- *Cruz, A. & Minda, J. P. (November 2023). Give me a break: Pausing to reflect may lessen attention attenuation in massed learning. Poster presented at the 64th Annual Meeting of the Psychonomic Society, San Francisco, CA.

- *Cruz, A. & Minda, J. P. (July 2023). Pram-ccaping: Efficient similarity data collection through trial reduction. Poster presented at the 33rd Annual Meeting of the Canadian Society for Brain, Behaviour, and Cognitive Science, Guelph, ON.

- *McKenzie, C. L. & Minda, J. P. (July 2023). Exploring the conceptual structure of the city. Poster presented at the 33rd Annual Meeting of the Canadian Society for Brain, Behaviour, and Cognitive Science, Guelph, ON.

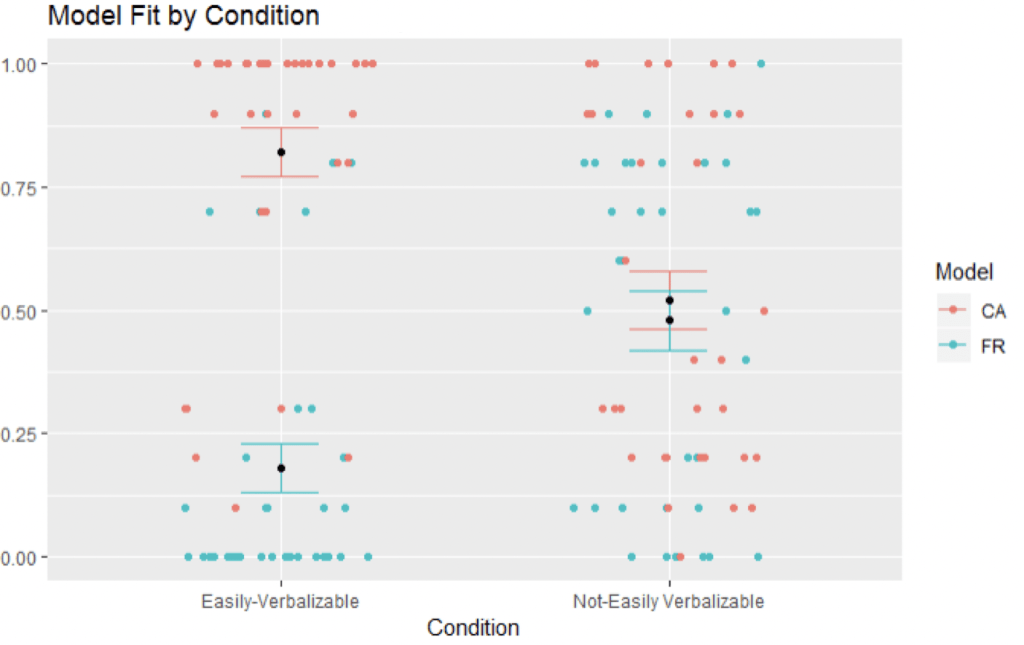

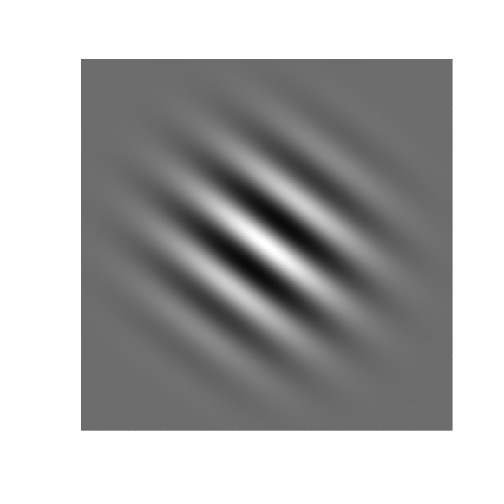

Participants learned to classify shapes known as Gabor patches (seen to the right), which are fairly common in visual perception research. Two features varied on these stimuli: the orientation of the alternating light and dark bands and the spatial frequency of those bands. We created a whole set of these, and the figure below shows how the stimuli were arranged along the Orientation and Frequency dimensions. The optimal categorization strategy for this set was the conjunctive rule that combined frequency and orientation. However, it was also possible to learn this category less than perfectly with one of two sub-optimal, single-dimensional rules that were easier to acquire. In the example below, a person who used only frequency could correctly classify all the B items, along with the items in the A1 and A3 regions, but would misclassify the items in the A2 region, because frequency alone does not distinguish A2 items from B items. In this case, participants would make errors on those stimuli until they transitioned to the optimal rule.
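To make the difference between the two strategies concrete, here is a minimal Python sketch. It is an illustration only, not the code or stimulus values from the study. The layout is an assumption: both dimensions are scaled to 0..1, the criterion on each is 0.5, category B sits in the high-frequency, high-orientation quadrant, and A2 is the A region that shares B's frequency range. All function names are hypothetical.

```python
# Assumed layout (not taken from the study): dimensions scaled to 0..1,
# criterion at 0.5 on each, B = high frequency AND high orientation.
CRITERION = 0.5

def true_region(frequency, orientation):
    """Return the region label (B, A1, A2, or A3) for a stimulus."""
    high_f = frequency > CRITERION
    high_o = orientation > CRITERION
    if high_f and high_o:
        return "B"
    if high_f:
        return "A2"  # same frequency range as B, different orientation
    if high_o:
        return "A1"
    return "A3"

def conjunctive_rule(frequency, orientation):
    """Optimal rule: respond B only when both dimensions are high."""
    return "B" if frequency > CRITERION and orientation > CRITERION else "A"

def frequency_only_rule(frequency, orientation):
    """Sub-optimal rule: ignore orientation entirely."""
    return "B" if frequency > CRITERION else "A"

def accuracy_by_region(rule, stimuli):
    """Proportion of correct responses for each region."""
    hits, counts = {}, {}
    for f, o in stimuli:
        region = true_region(f, o)
        correct = rule(f, o) == region[0]  # region[0] is "A" or "B"
        counts[region] = counts.get(region, 0) + 1
        hits[region] = hits.get(region, 0) + int(correct)
    return {r: hits[r] / counts[r] for r in counts}

# A 10 x 10 grid of stimuli; no value falls exactly on the criterion.
levels = [(i + 0.5) / 10 for i in range(10)]
stimuli = [(f, o) for f in levels for o in levels]

print(accuracy_by_region(conjunctive_rule, stimuli))
print(accuracy_by_region(frequency_only_rule, stimuli))
```

Under these assumptions, the frequency-only rule gets B, A1, and A3 right and every A2 item wrong, while the conjunctive rule is correct in all four regions.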
